Why GA4 Data Always Lies

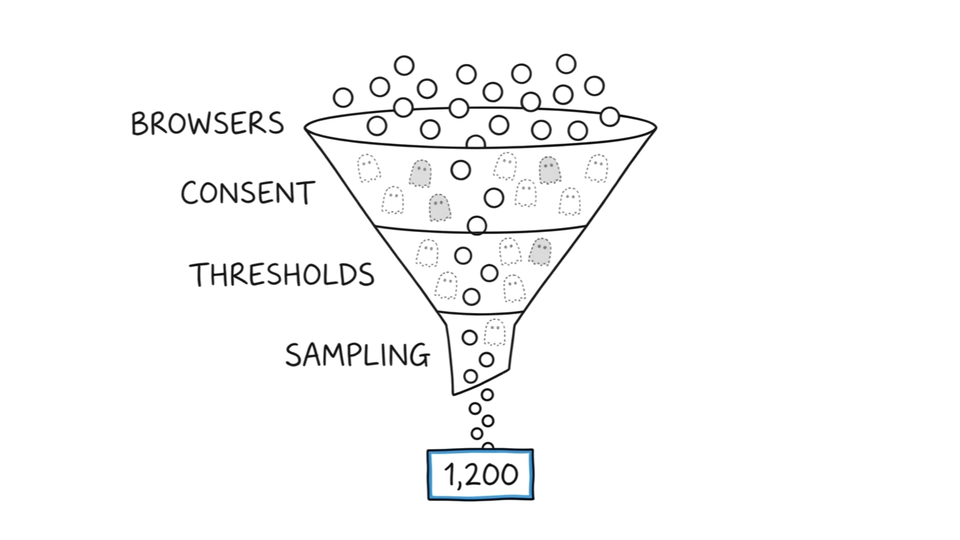

- GA4 data accuracy degrades across four compounding layers: browser blocking, consent gaps, thresholding, and sampling

- Each layer multiplies the previous loss. Some segments lose 40-60% of behavioral data.

- Downstream systems like Smart Bidding and AI tools treat degraded GA4 data as ground truth

You check GA4. The number says 1,200 conversions. Your CRM logged 1,800 leads from the same form. You run the report again. Same number. You check the tag. It fires. You check the filter. It's clean.

The number isn't wrong because something broke. GA4 inaccuracy is the anxiety every practitioner carries and nobody quantifies, because it isn't one number. It's a stack.

Where GA4 Data Dies First

GA4 loses data before your tracking tag ever fires. The failure starts at collection.

34% of browsers block Google Tag Manager by default, according to WebFX testing in March 2026. Safari. Brave. DuckDuckGo. Not niche browsers. Safari alone holds roughly a quarter of US browser share. These browsers don't block your tag because a user chose to. They block it by policy.

Then come ad blockers. According to a 2024 Ghostery and Censuswide study, 52% of Americans use one. Some of that overlaps with browser-level blocking. Some doesn't. The overlap isn't measurable from inside GA4.

Add cookie restrictions. Safari's Intelligent Tracking Prevention caps first-party cookies set by JavaScript, which includes the GA4 client ID cookie, at 7 days. A returning visitor who comes back on day 8 looks like a new user. GA4 doesn't just lose visitors. It miscounts the ones it keeps.
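The 7-day cap is easy to reason about with a small simulation. This is a minimal sketch, not a model of Safari's actual behavior: it assumes the cookie's lifetime resets on every visit, and the function name `apparent_users` and the visit days are made up for illustration.

```python
def apparent_users(visit_days, cookie_cap_days=7):
    """Count how many distinct users one real visitor appears to be.

    Assumption: the cookie is refreshed on every visit, so the visitor
    keeps the same ID as long as no gap between visits exceeds the cap.
    """
    if not visit_days:
        return 0
    days = sorted(visit_days)
    users = 1
    for previous, current in zip(days, days[1:]):
        # A gap longer than the cap means the cookie expired: new ID.
        if current - previous > cookie_cap_days:
            users += 1
    return users


# One person, visiting on day 0 and again on day 8.
print(apparent_users([0, 8]))         # counted as two users
# One person, visiting every three days: the cookie never expires.
print(apparent_users([0, 3, 6, 9]))   # counted as one user
```

The same person can inflate the new-user count several times over if their visits are spaced more than a week apart.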

One in three visitors arrives and leaves no trace. The silence looks like zero. It isn't zero. It's invisible.

What Consent Mode Actually Recovers

Consent Mode is Google's answer to the collection gap. The word "modeling" does a lot of work in that answer.

Consent Mode v2 requires two thresholds before behavioral modeling activates, per Google's documentation: at least 1,000 events per day from users who denied analytics consent, for at least 7 days, AND at least 1,000 daily users who granted consent, on at least 7 of the previous 28 days. Most small and mid-size sites never hit both thresholds. For them, Consent Mode is a configuration setting, not a data recovery tool.

Even when modeling activates, it cannot rebuild what it never observed. Six report categories don't support modeled data: Audiences, BigQuery export, Retention reports, Predictive Metrics, User Explorer, and Sequential segments, per Google's own documentation. According to Plausible Analytics, modeled data cannot reconstruct user journeys, session paths, source attribution, new versus returning status, or time on page.

What Consent Mode recovers is a partial estimate of totals. Not a recovery of behavior. The model answers one question: roughly how many people visited? It cannot answer the question practitioners actually need: what did they do? The gap between "how many" and "what happened" is where reporting decisions break.

How GA4 Accuracy Erodes Layer by Layer

Each layer of loss doesn't add to the previous one. It multiplies.

Browser blocking removes 34% of visitors at collection. Consent opt-outs remove another 20-55% of the remainder, depending on banner implementation. In Andy Crestodina's 33-account study for Orbit Media, GA4 underreported by 11.2% without consent banners, 20.3% with passive banners, and 55.6% when banners displayed directly to users. Thresholding hides small cohorts entirely. Sampling estimates large queries from partial data.

Start with 100 visitors. Browser blocking drops the count to 66. A consent banner with a 30% opt-out rate drops it to 46. Thresholding removes any cohort smaller than the privacy threshold. By the time GA4 reports a number, less than half the actual behavior is represented.
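The arithmetic above can be checked in a few lines. This is a minimal sketch using only the two rates quoted in the text (34% browser blocking, 30% consent opt-out). The function name `surviving_share` is illustrative, and thresholding and sampling are left out because the text gives no rate for them.

```python
def surviving_share(loss_rates):
    """Return the share of visitors left after every layer is applied.

    Each layer removes a fraction of what the previous layer left,
    so the surviving shares multiply rather than the losses adding.
    """
    share = 1.0
    for rate in loss_rates:
        share *= 1.0 - rate
    return share


visitors = 100
after_blocking = visitors * surviving_share([0.34])
after_consent = visitors * surviving_share([0.34, 0.30])

print(f"after browser blocking: {after_blocking:.0f}")   # 66
print(f"after consent opt-outs: {after_consent:.0f}")    # 46
```

Swapping in a different opt-out rate for your own banner shows how quickly the surviving share moves.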

A marketer who reads one of these stats thinks GA4 is 15% off. Run the layers together and, for some segments, 40-60% of behavior is estimated or invisible.

The chain doesn't end at the report. Degraded GA4 data feeds Smart Bidding algorithms. It populates automated audiences. It trains AI tools that treat GA4 data as ground truth. Without complete tracking data, Smart Bidding has less insight into which users convert, leading to higher costs per conversion and lower return on ad spend, according to JumpFly. The algorithm doesn't know the signal is incomplete. It scales whatever it receives.

The stack compounds silently. The dashboard looks normal.

When GA4 Accuracy Matters and When It Doesn't

GA4 data is directional, not precise. That distinction changes how practitioners use it.

GA4 works when you ask directional questions. Is traffic trending up or down? Which channels grow faster than others? Did a site change shift behavior? These questions survive the accuracy gap because they depend on relative movement, not absolute truth.
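A short calculation shows why relative movement survives, and when it stops surviving. This is a sketch with hypothetical numbers: the true visitor counts and the 46% capture rate are assumptions, and the trend only holds if the capture rate stays the same across both periods.

```python
def percent_change(before, after):
    """Relative change between two periods, in percent."""
    return (after - before) / before * 100


# Hypothetical true traffic for two periods.
true_before, true_after = 1000, 1200

# Case 1: GA4 records the same share (46%) in both periods.
stable_trend = percent_change(true_before * 0.46, true_after * 0.46)

# Case 2: the capture rate falls from 46% to 40% in the second period,
# for example after a stricter consent banner goes live.
shifted_trend = percent_change(true_before * 0.46, true_after * 0.40)

print(f"true trend:             {percent_change(true_before, true_after):+.1f}%")
print(f"observed, stable rate:  {stable_trend:+.1f}%")
print(f"observed, shifted rate: {shifted_trend:+.1f}%")
```

With a stable capture rate the observed trend matches the true one. When the rate shifts between periods, the trend is distorted too, so a banner or tagging change is worth noting next to any before-and-after comparison.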

Absolute conversion counts do not survive. The response to GA4 data accuracy problems isn't "fix GA4." It's knowing which questions GA4 can answer.

- For conversion counts: Trust server-side sources. CRM records, payment processors, and marketing automation platforms count what GA4 misses.

- For attribution: Implement server-side tagging or conversion APIs. Conversions sent server to server never pass through the browser, so browser-level blocking cannot drop them.

- For small segments: Accept the blind spot. Modeling fails below the threshold. Cross-reference with backend data or remove the segment from GA4 reporting.
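Cross-referencing with backend data can start as a simple ratio. The sketch below uses the opening example (1,200 conversions in GA4 against 1,800 leads in the CRM). The function name `capture_rate` is illustrative, and it assumes both counts cover the same form and the same date range.

```python
def capture_rate(ga4_count, backend_count):
    """Share of backend-recorded conversions that GA4 also reported."""
    if backend_count <= 0:
        raise ValueError("backend_count must be positive")
    return ga4_count / backend_count


ga4_conversions = 1200   # from the GA4 report
crm_leads = 1800         # from the CRM, same form and period

rate = capture_rate(ga4_conversions, crm_leads)
print(f"GA4 captured {rate:.1%} of CRM leads")
print(f"missing from GA4: {1 - rate:.1%}")
```

Tracking this ratio over time, per form or per channel, turns the gap into a number you can report alongside the GA4 figure.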

GA4 works for the questions it was built to answer: relative scale, directional trends, channel comparison. The question isn't "is GA4 accurate?" The question is which decisions need accuracy that GA4 cannot provide.

GA4 counts what arrives. Browsers block the arrival. Consent banners limit what's recorded. Modeling guesses what was lost. Thresholding hides what's too small. Every system downstream treats the output as fact.

GA4's inaccuracy is not a number to fix. It's a condition to work around. The practitioners who get this right don't chase precision in a tool not built for it. They match the data source to the decision. They stop presenting estimates as measurements.