Judgment Atrophies When You Accept AI

Every time AI hands you an answer, you make a choice about your own capability. You probably don't notice it. But the choice is there.

That hesitation you feel. Should I check this? Do I even know how? That's your expertise sending up a flare.

Most people move on. The choice moves with them.

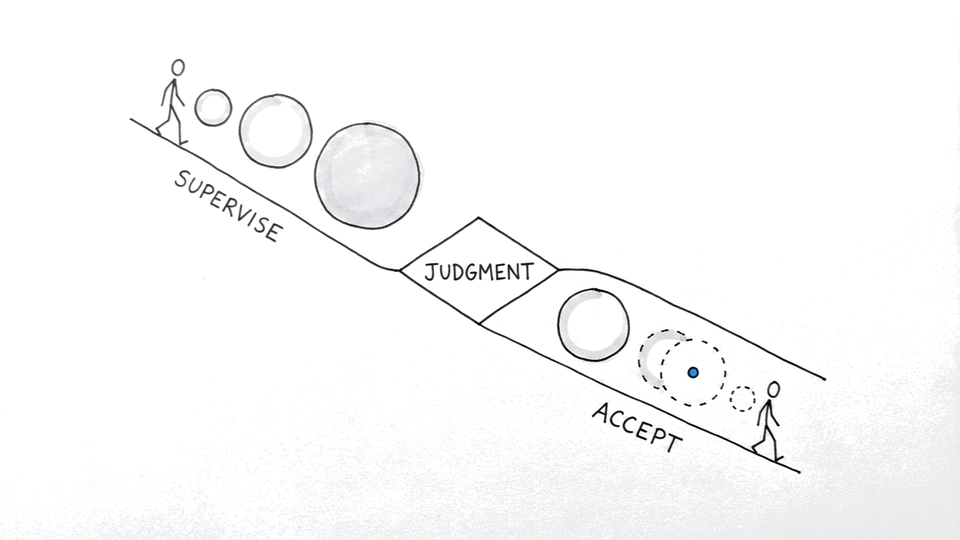

How delegation mode determines whether judgment builds or atrophies

AI-assisted skill atrophy is the hidden tax senior marketers pay when automation handles the judgment calls they used to make themselves. It doesn't show up in a dashboard. It doesn't trigger an alert. It accumulates quietly, interaction by interaction, until the tool is wrong and you can't tell.

When you treat AI output as a proposal, not a conclusion, you stay in the loop. You compare its reasoning to your own model of the problem. You notice when something's off.

You build intuition about where it hallucinates and where it holds. You get reps.

When you accept AI output uncritically, you delegate judgment. And judgment atrophies without use.

[Source: Boone et al., Scientific Reports (2020), found that greater lifetime GPS reliance correlated with reduced use of hippocampus-dependent spatial memory strategies. Navigators who delegated wayfinding to GPS stopped engaging the same hippocampal circuits activated by active navigation. The decline tracked whether they exercised judgment or outsourced it.]

Both approaches feel productive. Both reach the destination. The gap stays invisible until it isn't. Until you need to make a call without AI and realize you're not sure you can anymore.

The reference point AI output replaces when you stop maintaining your own

A calculator doesn't erode math ability if you understand the calculation. It becomes a problem when you can no longer estimate, can't tell whether the answer makes sense, and just trust the output.

Same mechanism with AI.

When you supervise AI output, treating each response as a proposal, you compare the AI's reasoning to your own. You notice the gaps. You build intuition about the tool's tendencies.

When you stop doing that, you lose the reference point. The AI's output becomes the standard, because you've stopped maintaining your own.

Across the GA4 accounts I've audited, the marketers who struggle most to catch attribution errors are the ones who outsourced the analysis longest. Not because the tool failed them. Because they stopped building the judgment that tells them when the tool is wrong.

Why detecting AI errors is the capability that disappears first

The most dangerous moment in any AI workflow isn't when the tool makes a mistake. It's when you can't tell it did. That gap widens every time you skip the evaluation step.

The capability to evaluate, to sense when something is wrong, and to know when you need your own judgment is what's at stake in every interaction you hand off without thinking. The interface doesn't just produce output. It shapes the muscle you build or lose.

Supervise or accept. Build judgment or borrow it. The choice is there whether you make it consciously or not. More on this in the AI for marketers series.