Why AI Skills Lists Skip Experience

- AI skills lists are built for beginners: prompting, tool fluency, and workflow automation are all learnable in a weekend, none requiring prior domain experience

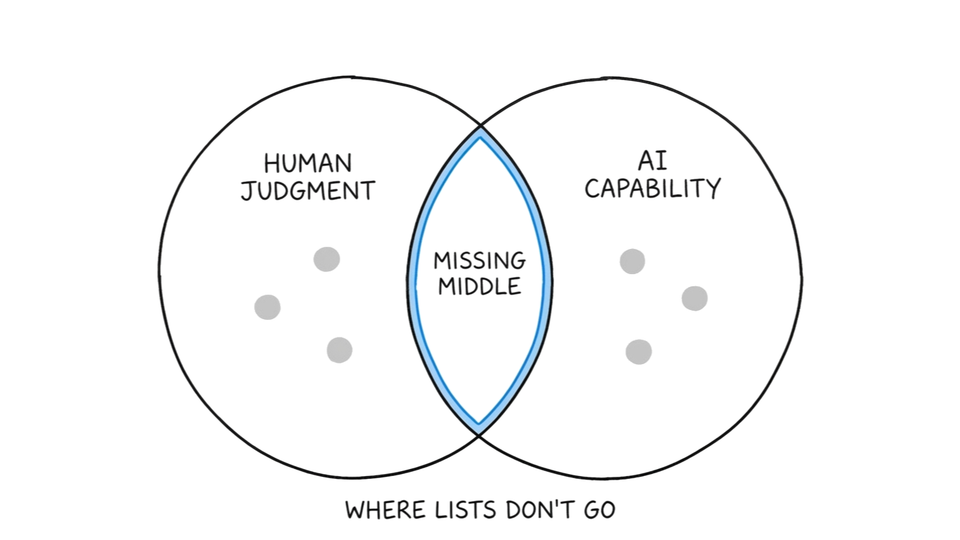

- The missing middle, mapped by Daugherty and Wilson in Human + Machine, is where human judgment and AI capability combine into something neither produces alone

- Senior practitioners need to identify which of three roles they already half-occupy (Trainer, Explainer, or Sustainer) and build deliberately in that direction

The missing variable isn't effort. You tried the tools. Watched the walkthroughs. Sat through the AI certification module.

The outputs were generic. You assumed you were doing something wrong.

You weren't. The problem was where you were standing in the system.

AI skills for marketers only compound when built on the domain judgment AI can't supply. That's the missing middle. Your experience is a moat, not a liability. The lists just never told you that.

AI Skills Lists Reward Beginners, Not Practitioners

Every AI skills list on the first page of search results (the SERP) covers three things: prompting, tool adoption, workflow automation. All learnable in a weekend. None requiring a day of prior marketing experience.

AI skills for marketers divide into two categories. Surface skills, like prompting fluency, tool adoption, and workflow templates, have no floor. Anyone can learn them. Foundational skills, like training a model on what good output looks like or evaluating AI output against business context, require accumulated domain judgment.

That beginner focus is not an accident. A March 2026 CoSchedule survey of 900 marketers found just 3% consider themselves AI experts. Every list on the SERP is built for the other 97%.

The problem is that a marketer starting fresh and a 7-year practitioner have completely different needs. One needs to start. The other needs to know where to stand.

Every published list covers the surface category. Almost none covers the foundational one. The gap isn't in the skills. It's in who the lists were built for.

The Missing Middle Is Your Competitive Moat

Human judgment and AI capability each produce something on their own. The missing middle is where they combine into something neither produces without the other.

Paul Daugherty and H. James Wilson identified this zone in Human + Machine (2018). The missing middle is not the human doing work AI hasn't learned yet. It's the human providing the context that turns AI output from statistically likely to strategically correct.

Senior practitioners already occupy this zone. They just don't have language for it.

CoSchedule's 2026 survey found 79% of marketers say AI improved how they work, yet every major channel showed declining ROI. The gap isn't between users and non-users. It's between practitioners who can evaluate what the AI returns and those who can't.

That's a judgment problem, not a skills problem. Judgment comes from pattern recognition built over years of campaigns, decisions, and recoveries.

AI skills for marketers compound fastest when built on domain judgment AI can't replicate. That's the actual moat.

Three AI Roles Only Experience Can Fill

The lists describe tasks. What they don't describe is the roles those tasks feed, or who can actually fill them.

Daugherty and Wilson name three roles built on domain judgment: Trainers, Explainers, and Sustainers. Each maps to something senior practitioners already do. None is accessible to someone starting from zero.

Trainers teach AI what good output looks like. They feed examples, label what's correct, set reward signals. That requires taste. Taste requires accumulated judgment about what good means in a specific domain.

A marketer who has written 500 campaign briefs knows what a strong brief looks like. A marketer who has written two doesn't.

Explainers translate AI output into decisions leadership can act on. That requires organizational knowledge. Knowing which stakeholder needs which frame. What the CFO needs to hear versus what the channel team needs to act on.

A tool can't carry that. A 7-year practitioner already does.

Sustainers keep data and prompt systems honest over time. That requires accountability and systems thinking. Knowing what to audit, when to audit it, and what a drift pattern looks like before it becomes a costly mistake.

Trainers, Explainers, and Sustainers are roles that only accumulated domain judgment can fill. Tool fluency gets you to the door. It doesn't get you through it.

What to Build Instead of Chasing Fluency

If prompt engineering is a skill anyone can learn in a weekend, why does it dominate every AI upskilling plan for senior practitioners?

The certification market has a structural incentive to make tool fluency feel like the scarce resource. It's measurable, teachable, and sellable. The skills that actually compound are harder to package into a course: training judgment, explanation depth, systems maintenance. So they don't appear in the course catalog.

AI skills for marketers split differently at seniority. Entry-level practitioners need tool fluency first. It's the floor. Senior practitioners who already have domain knowledge need a different orientation: stop optimizing for prompting. Start asking which of the three roles you already half-occupy.

The question isn't "how do I learn AI?" The question is "am I a Trainer, an Explainer, or a Sustainer?" Pick the one that matches your existing domain depth. Build from there.

One precondition: the tools those roles run on need to produce real signal. Before committing budget, screen them for AI washing with a three-signal test that any practitioner can run without a technical audit.

You don't come out of this transition ahead by logging the most tool hours. You come out ahead by recognizing early that your domain judgment was the scarce thing all along.

You're not catching up. You're already in the middle of the job the transition is creating.

The question is whether you name it before someone else does.