The Shadow Strategy

Your budget, your calendar, and your promotion list don't lie. Everything else might.

Four Identical Pairs

In 1977, Timothy Wilson and Richard Nisbett laid four pairs of nylon stockings across a table and asked women to choose the best quality pair.

The women examined each pair carefully. They evaluated texture, elasticity, stitching, sheen. They articulated specific, confident reasons for their preferences.

All four pairs were identical.

Same manufacturer. Same production run. Same batch.

No differences existed to evaluate. But the women didn't pick randomly. They overwhelmingly chose the pair on the far right, a position bias the researchers had predicted.

When asked whether the position of the pairs had influenced their choice, virtually all of them denied it. Their experience of evaluating quality felt genuine. The reasons they'd articulated felt real. They weren't performing for the researchers. They weren't lying.

Their conscious minds had generated a convincing explanation for behavior their conscious minds didn't choose.

Wilson later called this the "adaptive unconscious," the vast processing system that drives most human behavior while the conscious mind watches, explains, and sincerely believes it's in charge. Your conscious mind isn't the CEO making decisions. It's the press secretary writing explanations for decisions already made.

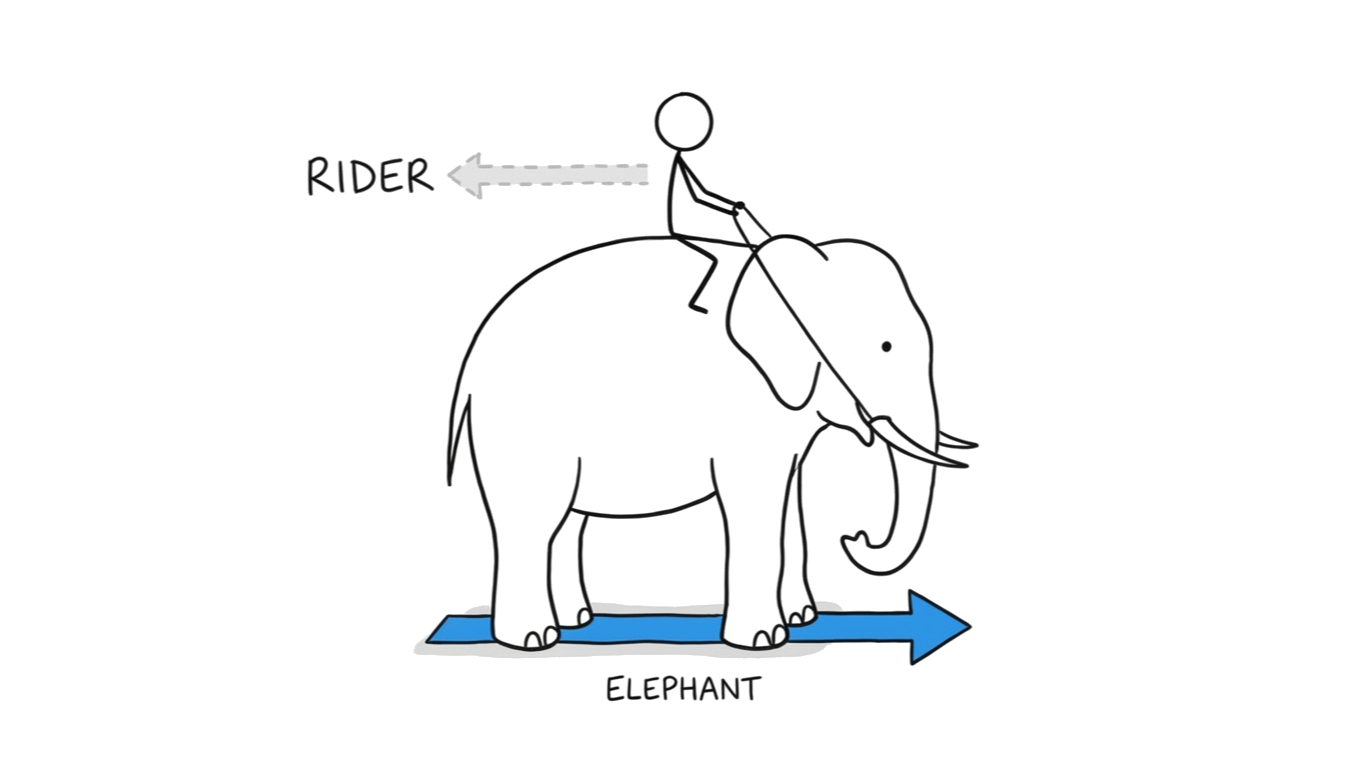

Jonathan Haidt offered a vivid image for the same split: a rider on an elephant. The rider thinks it's steering. It constructs narratives, explains decisions, articulates preferences. But the elephant is much larger. When rider and elephant disagree about where to go, the elephant wins.

The rider doesn't experience this as losing control. The rider experiences it as having made a perfectly reasonable decision, and then constructs an explanation for why this was the right call all along.

In the stocking experiment, the elephant picked the pair on the right.

The rider wrote a detailed review of its texture.

The Quality Gap

If this gap only existed in individuals, it would be a footnote in a psychology textbook.

Organizations have riders and elephants too.

In the late 1980s, Hewlett-Packard's semiconductor division sent a delegation to Japan to study manufacturing quality. HP had rigorous written quality standards. Detailed process documentation. Formal quality review procedures. The delegation expected incremental improvements. A better checklist here, a tighter tolerance there.

What they found shook the division.

Japanese competitors had defect rates orders of magnitude lower. Not 10% better. Not twice as good. Orders of magnitude. The gap between HP's espoused quality standards and its actual manufacturing performance was enormous, and invisible to everyone inside the system until they stood in someone else's factory.

HP's quality manual said excellence. The manufacturing floor produced something else. Not because people were incompetent and not because leadership was lying. The manual described what HP's leadership genuinely believed they were building. The factory floor revealed what the system actually produced.

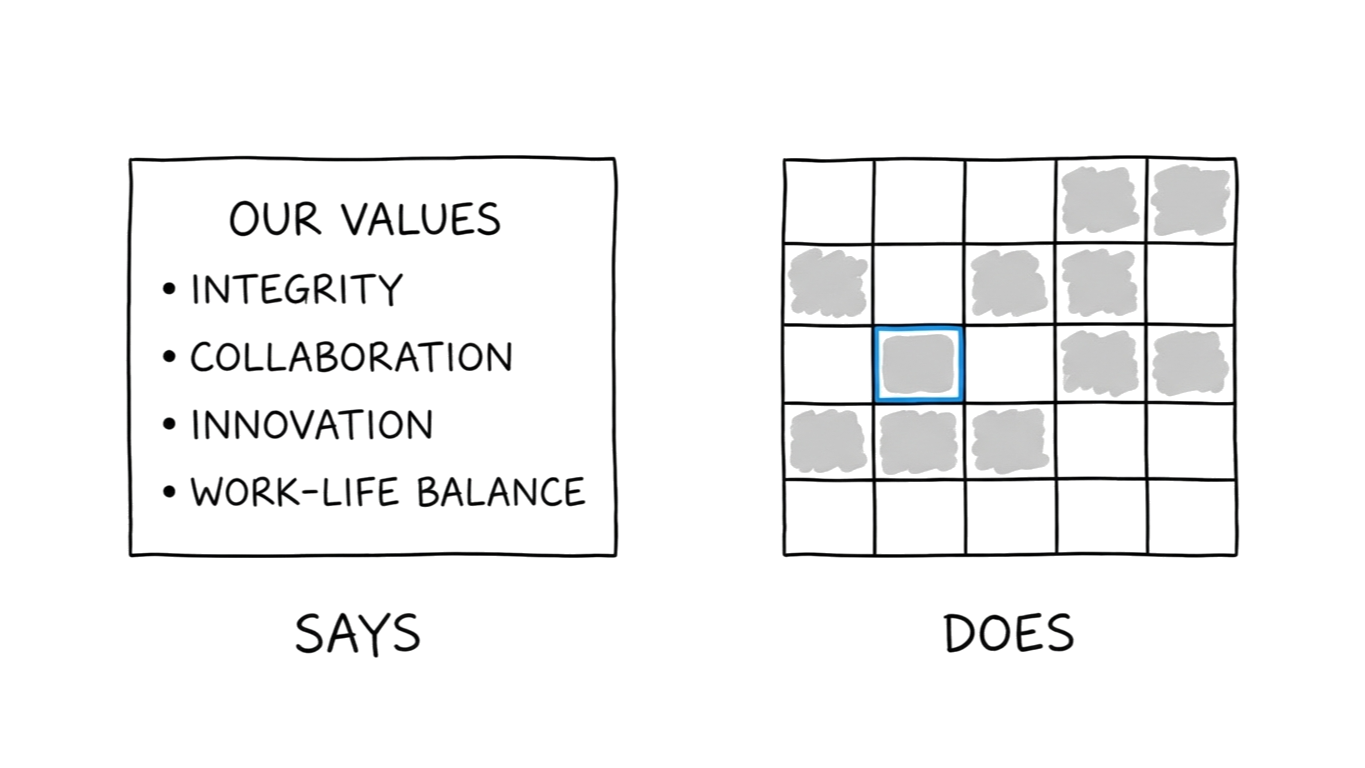

Chris Argyris spent decades studying this exact phenomenon. He called the two sides espoused theory (what a system says it believes) and theory-in-use (what its behavior implies it believes). His finding was devastating: in most organizations, people operating with a gap between the two cannot see it.

Not because they're hiding it.

Because the system that articulates is not the same system that acts.

The gap isn't failure. It's architecture.

The Immune Response

You might expect that once a gap is identified, it gets fixed. The stocking experiment got published. HP learned from Japan.

Most gaps never get identified. They have protection.

In 1847, Ignaz Semmelweis noticed something in the maternity wards at Vienna General Hospital. The ward staffed by doctors had a mortality rate two to three times higher than the ward staffed by midwives, roughly 10% against 4%. He investigated and found the cause: doctors were going directly from autopsies to deliveries without washing their hands.

He introduced a chlorine handwashing protocol. A basin of chlorinated lime solution, placed between the morgue and the delivery room. Mortality dropped from roughly 10% to under 2%.

His colleagues rejected the finding.

Not because the evidence was weak. Because accepting it meant accepting that doctors had been killing patients. The medical establishment's self-concept as healers, scientists, and professionals couldn't absorb that conclusion. Semmelweis lost his post at the hospital, grew increasingly erratic, was committed to an asylum, and died there at 47.

The gap between what the medical establishment said it believed and what its behavior implied had an immune system. When Semmelweis threatened to expose the gap, the system mounted a response proportional not to the size of the problem but to the severity of the threat.

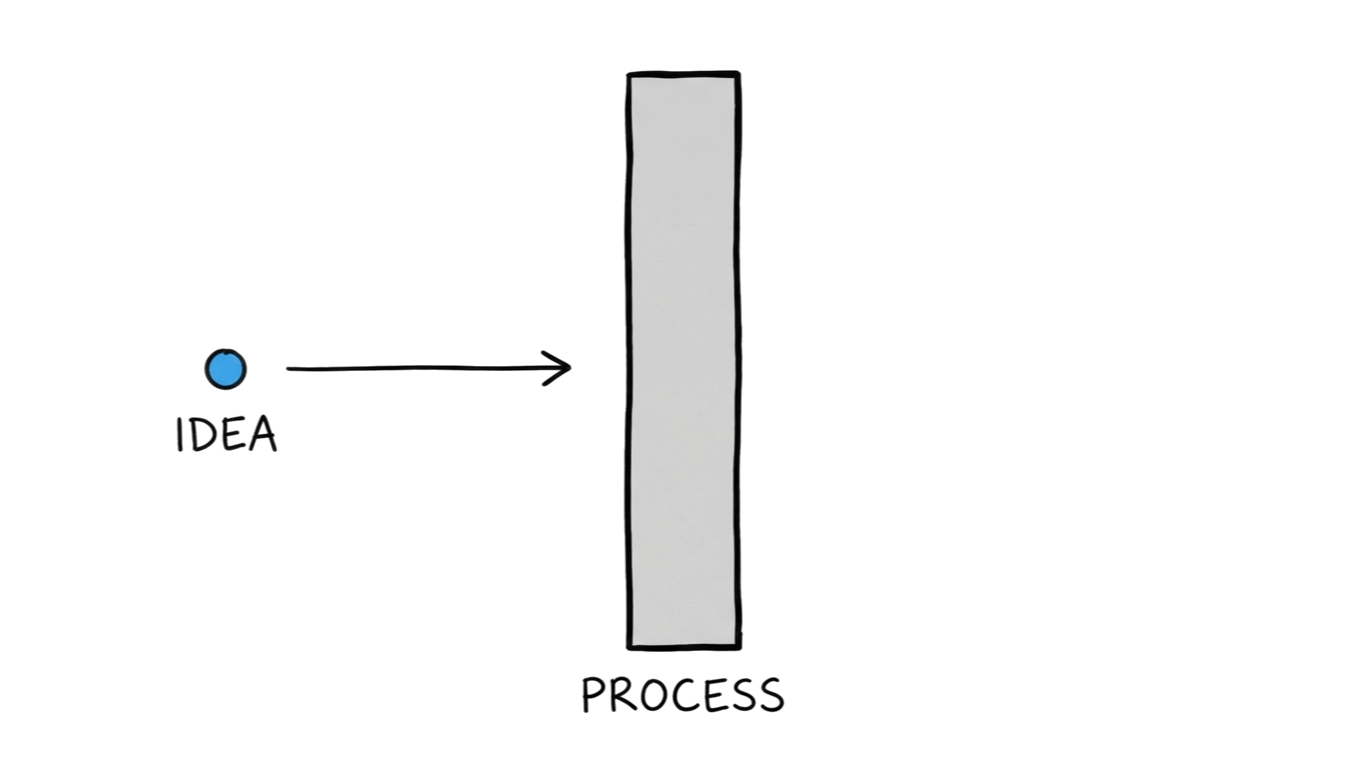

Edgar Schein documented the same immune response in corporate settings. At Ciba-Geigy, the Swiss chemical and pharmaceutical company, he observed a culture that explicitly valued innovation. Senior leadership said it. Strategy documents affirmed it. They meant it.

When junior researchers brought genuinely novel ideas to leadership meetings, the ideas died. Not through dramatic rejection. Through process.

Novel ideas needed sign-offs from committees designed for incremental improvement. They required ROI projections for ventures with no precedent. The formal evaluation system was structurally incapable of recognizing what it claimed to want.

Nobody at Ciba-Geigy experienced this as resistance to innovation. Each requirement felt prudent. Each gate felt reasonable. The immune system operated so smoothly that neither the people defending the gap nor the people blocked by it could name the mechanism.

The defense isn't conspiracy.

It's architecture.

The Factory Floor

If the gap is universal and actively defended, how does it ever get exposed?

Through consequences that overwhelm the defense.

Work-to-rule actions reveal this with surgical precision. Workers don't strike. They do something more devastating: they follow every written rule, every documented procedure, every official process, exactly as written, with zero deviation.

Operations collapse.

When Australian air traffic controllers worked to rule in the 1990s, flight delays cascaded across the country within hours. When Italian railroad workers followed their operating manual precisely, trains ground to a halt. The same pattern has appeared in hospitals, government agencies, and manufacturing plants.

The formal system, the one leadership designed, documented, and espoused, cannot run the operation. The real work happens through informal workarounds, undocumented shortcuts, tacit knowledge that operators carry in their hands and habits. Michael Polanyi called this dimension of knowledge "tacit": what doing knows that saying can't reach.

The espoused system is the map. The real system is the territory. And the territory is far more complex, adaptive, and capable than any map can capture.

Work-to-rule doesn't create dysfunction. It reveals that the espoused system was never the actual system. The gap was always there. The workers' informal expertise was hiding it.

Remove the workarounds, and what remains is only the story the organization tells about itself.

The Drift You Can't Correct

Now you've seen the gap, its defense, and the force that breaks through.

One more mechanism makes the whole thing permanent.

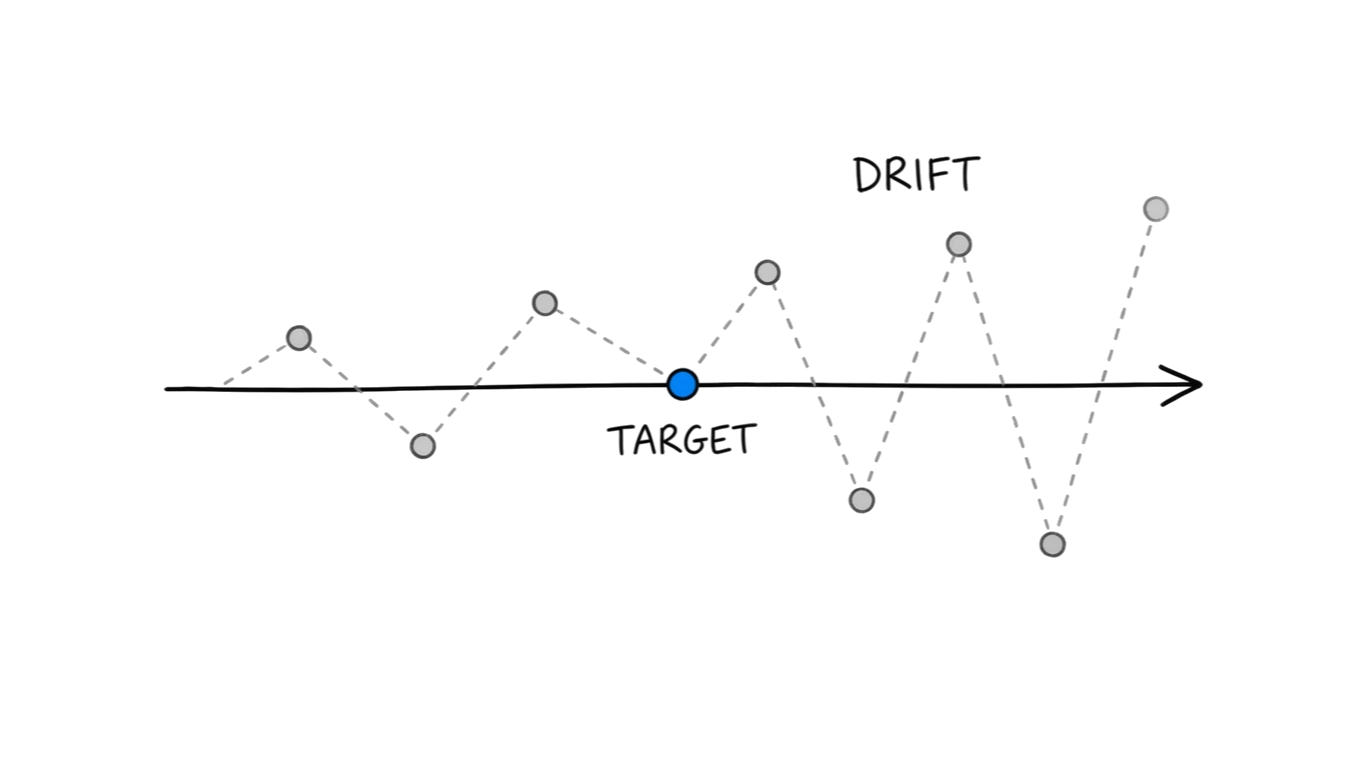

W. Edwards Deming spent his career arguing that most performance variation, roughly 85% by one of his estimates, comes from the system, not the individual. He ran a demonstration called the funnel experiment. The setup was simple: drop a marble through a funnel, try to hit a target. Each time the marble misses, adjust the funnel to compensate.

The adjustments make the results worse.

Leave the funnel alone and the marbles scatter as tightly as the system allows. Shift it to offset each miss and the variance of the scatter doubles. Re-aim it at wherever the last marble landed and the pattern drifts further from the target with every correction. Overcorrection amplifies variation rather than reducing it.
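For readers who want to watch the funnel misbehave rather than take it on faith, here is a minimal Python sketch. It is a one-dimensional simplification, not Deming's physical setup: the target sits at 0, each drop adds Gaussian noise, and the noise level of 1.0, the 10,000 drops, and the fixed seed are arbitrary assumptions. The rule numbers follow the usual numbering of the funnel rules (1: leave the funnel alone; 2: move it opposite the last miss; 4: re-aim it at the last landing spot); `run_funnel` is a hypothetical helper written for illustration.

```python
import random
import statistics


def run_funnel(rule, n=10_000, sigma=1.0, seed=0):
    """Simulate a 1-D funnel experiment with the target at 0.

    rule 1: never move the funnel.
    rule 2: after each drop, shift the funnel by the negative of the last miss.
    rule 4: re-aim the funnel at wherever the last marble landed.
    Returns the list of landing positions.
    """
    rng = random.Random(seed)
    funnel = 0.0
    landings = []
    for _ in range(n):
        # Where the marble lands: funnel position plus the system's own noise.
        landing = funnel + rng.gauss(0.0, sigma)
        landings.append(landing)
        if rule == 2:
            funnel -= landing  # "compensate" for the miss (target is 0)
        elif rule == 4:
            funnel = landing   # chase the last result
        # rule 1: leave the funnel exactly where it is
    return landings


for rule in (1, 2, 4):
    spread = statistics.pstdev(run_funnel(rule))
    print(f"rule {rule}: spread {spread:.2f}")
```

If the sketch behaves as the arithmetic predicts, rule 1's spread should land near 1.0 and rule 2's near 1.41 (twice the variance, because each landing now carries both the new noise and the last correction), while rule 4's wanders far wider because every landing becomes the next aim point.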

Now picture a quarterly review built on this logic. Miss the target, adjust. Miss again, adjust again. Each fix addresses the last symptom while seeding the next.

The team isn't failing because people are bad. The measurement system is creating the behavior it then measures against.

Claude Steele and Joshua Aronson demonstrated a parallel mechanism at Stanford in 1995. They gave Black and white students the same set of difficult verbal problems from the GRE. In one condition, the test was described as a diagnostic of intellectual ability. In the other, it was described as a problem-solving exercise.

When the test was framed as a diagnostic, Black students solved significantly fewer problems than white students with comparable SAT scores. When the same test was framed as non-diagnostic, that gap vanished.

The measurement didn't capture a pre-existing reality. The measurement created the reality it claimed to observe. The frame shaped the behavior that produced the score that justified the frame.

We don't measure what matters. What we measure starts to matter.

Every measurement system that targets individuals instead of systems, tracks outcomes instead of processes, and disconnects the measurer from consequences doesn't just fail to capture reality. It generates new gaps. New blind spots. New splits that will need their own exposure, their own repair, and their own flawed measurement.

The cycle restarts.

The Engine

Step back far enough and the stories connect.

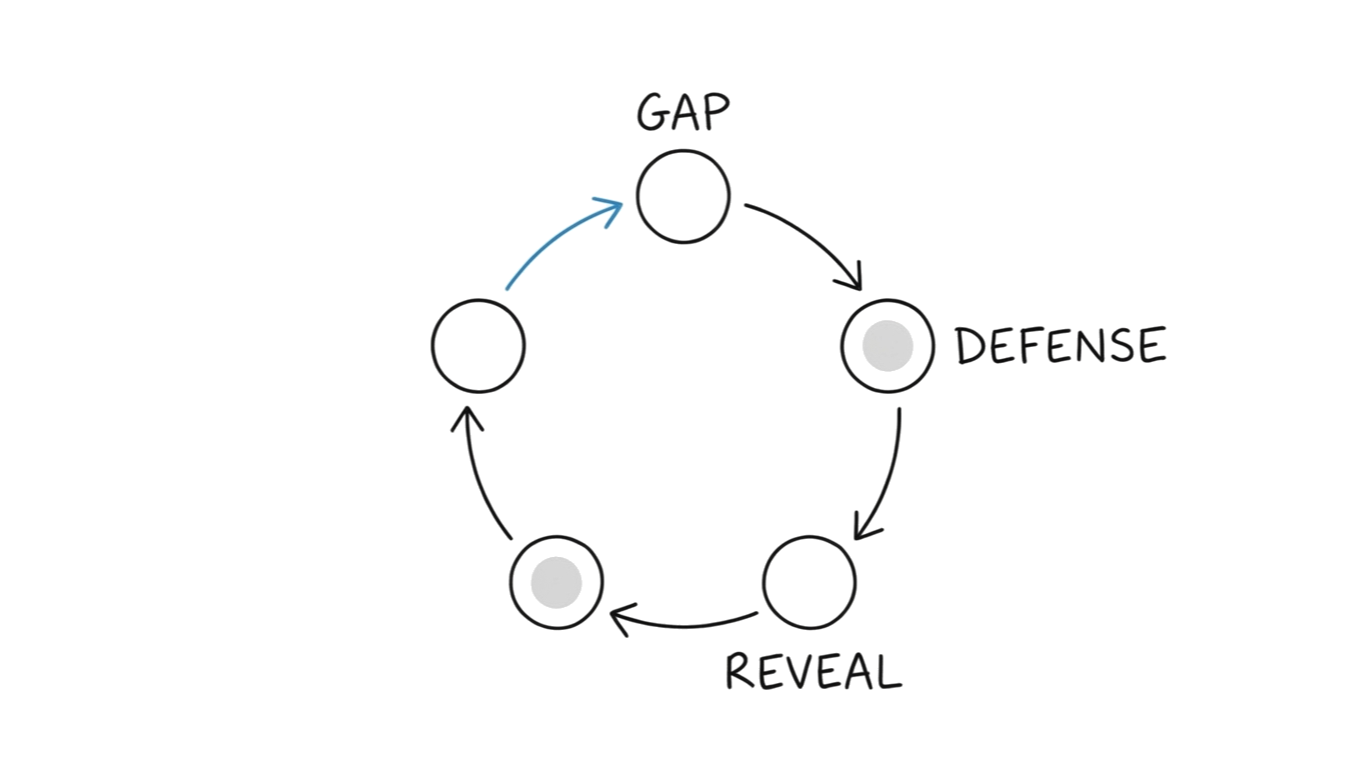

A gap invisible to the self. An immune system that defends it. Conditions that force it into the open. And measurement that creates new gaps from the old repairs.

Gap → Defense → Reveal → Repair → Filter → new Gap.

Not a problem to solve. An engine that runs.

Every organization, every team, every person operates this cycle. The budget says one thing. The strategy deck says another. The calendar reveals priorities the mission statement never mentions. The promotion list rewards behavior the values poster doesn't describe.

This is what I call shadow strategy: the operating system you can't see running. Not because it's hidden. Because the system that looks is not the same system that acts.

Shadow strategy isn't hypocrisy. It's the opposite. Hypocrisy requires awareness of the gap. Shadow strategy exists precisely because the gap is invisible.

The rider writes the press release. The elephant made the decision.

And here is the part that makes the cycle permanent: you can't stand outside it. Your attempt to diagnose someone else's shadow strategy creates your own. The CMO auditing team behavior is performing for the board. The consultant diagnosing dysfunction is simplifying reality in ways that create new blind spots. The CEO measuring culture is producing a measurement artifact that distorts culture.

The cycle is not just organizational. It's epistemological. You cannot observe it without participating in it.

What Survives

The invitation isn't to eliminate the cycle.

It can't be eliminated.

The invitation is to get curious about where you are in it.

You might point to organizations that seem to walk the talk. Toyota. Bridgewater Associates. Companies where espoused and actual genuinely align.

Look closer and the alignment isn't natural. It's engineered.

At Bridgewater, Ray Dalio built radical transparency into the operating system. Every meeting recorded. Every decision documented. Disagreement required. The architecture forces the gap into the open, not once, but continuously.

Toyota's kaizen culture is a formalized reveal-and-repair loop. Problems surfaced daily. Corrections made at the source. The system assumes the gap is always forming and builds infrastructure to catch it before it hardens.

These organizations don't disprove the cycle. They ARE the cycle running fast. And the alignment degrades when the maintenance stops: when a new CEO deprioritizes transparency, when growth pressure overrides the kaizen ritual, when the cultural infrastructure that forces the gap into the open gets quietly dismantled in the name of efficiency.

The gap is permanent. The speed at which you surface it is the variable.

Your budget tells you what you actually prioritize. Your calendar tells you what you actually value. Your promotion list tells you what behavior you actually reward.

Everything else is narration.

What got cut last budget cycle? That's what's actually dispensable.

Where does the CEO's calendar go? That's what leadership actually values.

Who got promoted? That's what behavior gets rewarded.

What happens under pressure? That's your theory-in-use.

The distance between those answers and the story you tell about them?

That's your shadow strategy.

It's not an accusation.

It's information.

Explore Further

The cycle has five movements. Each has its own deep dive:

Wilson's stockings showed us a gap we can't see from inside. But the gap is only the beginning.

The Sincere Liar — Why the gap between what you think you're doing and what you're actually doing isn't deception. It's architecture. Explores THE SPLIT.

Semmelweis pointed at the gap. The gap destroyed him. Ciba-Geigy's process killed ideas so smoothly that nobody noticed the immune response.

The Immune Response — How the system protects its blind spots from exposure, and why the defense gets stronger with experience. Explores THE DEFENSE.

Work-to-rule proved the formal system was never the real system. But that's only one of three ways reality breaks through.

The Moment It Breaks — Pressure, breach, and method: the three conditions that force truth past the defense. Explores THE REVEAL.

Deming's funnel drifts further with every correction. Steele's frame creates what it measures. But faster loops can shrink the gap.

The Faster Loop — Why learning speed beats planning quality, and how to make the gap visible before consequences force it open. Explores THE CLOSE.

Every quarterly review that adjusts for the last miss is seeding the next one. The measurement isn't capturing reality. It's replacing it.

The Invisible Frame — How measurement systems that target individuals, track outcomes, and disconnect from consequences generate the next shadow strategy. Explores THE FILTER.
